Generative AI Models Guide

The road to learning Generative AI starts with Machine Learning algorithms. Beyond those fundamentals, a handful of specialized models are central to Generative AI problems, and you need to know them if you are aiming for a career in the field. So, in this article, I’ll take you through the essential Generative AI models you should know.

Below are all the essential Generative AI models you should know:

- Generative Adversarial Networks (GANs)

- Transformer Models

- Diffusion Models

- RNNs & LSTMs

Let’s go through these Generative AI models in detail and the resources you can follow to learn about them practically.

Generative Adversarial Networks (GANs)

Generative Adversarial Networks (GANs), introduced by Ian Goodfellow and colleagues in 2014, are a class of Machine Learning models designed to generate new data instances that resemble the training data. They became popular through their applications in image generation, such as deepfakes, artwork generation, and other creative tasks.

GANs consist of two neural networks, a Generator and a Discriminator, that compete against each other. This competition is key to the GAN’s ability to generate realistic outputs. The generator attempts to create fake data while the discriminator evaluates whether the data is real or fake. Over time, the generator gets better at producing realistic data, and the discriminator improves at distinguishing between real and fake data. This process continues until the generator becomes skilled at creating highly realistic outputs.

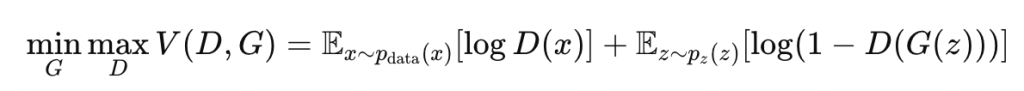

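The generator tries to minimize the following value function while the discriminator tries to maximize it:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]
$$

Where:

- G(z) is the data generated by the generator from a random noise vector z.

- D(x) is the discriminator’s estimate of the probability that x is real data.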
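As a rough numerical illustration, the sketch below evaluates the standard GAN value function, the mean of log D(x) over real samples plus the mean of log(1 - D(G(z))) over generated samples. The function name `gan_value` and the probabilities passed to it are hypothetical values picked by hand; this is not a training loop, only a way to see how the quantity behaves.

```python
import math

def gan_value(d_real, d_fake):
    """Estimate V(D, G) from discriminator outputs.

    d_real: D(x) for real samples x, each strictly between 0 and 1
    d_fake: D(G(z)) for generated samples, each strictly between 0 and 1
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# Hypothetical discriminator outputs, chosen by hand for illustration.
# Case 1: the discriminator separates real from fake samples well.
confident = gan_value(d_real=[0.9, 0.8, 0.95], d_fake=[0.1, 0.2, 0.05])
# Case 2: the discriminator cannot tell them apart and outputs 0.5 everywhere.
fooled = gan_value(d_real=[0.5, 0.5, 0.5], d_fake=[0.5, 0.5, 0.5])

print(f"discriminator winning: {confident:.3f}")
print(f"discriminator fooled:  {fooled:.3f}")
```

The value is higher when the discriminator separates the two sets, which is what the discriminator wants. When it outputs 0.5 for every sample, the value drops to -2 log 2 (about -1.386), which is the point the generator is pushing toward.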

Here are the applications of GANs you should know:

- Image Generation: GANs can generate realistic images that are often hard to distinguish from real ones.

- Deepfakes: GANs have been used to generate fake videos of people speaking or performing actions that they didn’t actually do.

- Data Augmentation: GANs can create synthetic data to augment datasets in fields like medical imaging.

Here are the resources you can follow to learn about GANs practically:

Transformer Models

Transformers are behind the success of large language models (LLMs) like GPT, BERT, and T5. They excel at understanding the context of data sequences, such as text, better than previous models like RNNs and LSTMs.

The key innovation in transformers is the self-attention mechanism, which allows the model to weigh every part of the input sequence (e.g., a sentence) against every other part simultaneously. Unlike RNNs, which process data sequentially, transformers process the entire input at once. This makes training highly parallelizable and lets the model relate distant positions directly, although the cost of self-attention grows quadratically with sequence length.

The self-attention mechanism for a given input sequence $X = (x_1, x_2, \ldots, x_n)$ is calculated as:

$$
\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V
$$

Where:

- Q, K, and V are the Query, Key, and Value matrices derived from the input.

- $d_k$ is the dimension of the key vectors.
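To make the calculation concrete, here is a minimal sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, written with plain Python lists so every step is visible. The helper names are hypothetical, and the example feeds the same toy matrix in as Q, K, and V; a real transformer would first pass the input through learned projection matrices and would use a tensor library.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def transpose(m):
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    b_cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]

def scaled_dot_product_attention(q, k, v):
    """Return (output, weights) for softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])  # dimension of the key vectors
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Toy input: 3 positions, 2 features each. Using x directly as Q, K, and V
# is a simplifying assumption (no learned projections).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
output, weights = scaled_dot_product_attention(x, x, x)

for position, row in enumerate(weights):
    print(position, [round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and says how much one position attends to every other position. Because all rows are computed from the same matrix products, no position has to wait for the previous one, which is the contrast with RNNs drawn above.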

Here are the applications of transformer models you should know:

- Natural Language Processing (NLP): Transformers are the foundation of state-of-the-art models for NLP tasks like translation, summarization, and text generation.

- Large Language Models: GPT, BERT, and T5 are transformer-based models that have significantly advanced the capabilities of AI in text generation and understanding.

- Vision Transformers (ViT): Transformers are also being used in image processing tasks by treating images as sequences of patches.

Here are some resources you can follow to learn about transformer models practically:

- Document Analysis using LLMs

- Text Summarization Model with LLMs

- Code Generation Model with LLMs

- Text Generation with LLMs

Diffusion Models

Diffusion models are a class of generative models used for generating images and other complex data types. They work by progressively denoising random noise until a high-quality sample is produced. These models have gained attention in recent years for generating high-resolution images and are competitive with GANs in many tasks.

Diffusion models learn to reverse the process of adding noise to data. They start with random noise and gradually refine this noise step by step to create a clean, high-quality sample.

- Forward Process: Adds noise to the data over several steps, making the data more random.

- Reverse Process: The model learns to reverse the noise addition by generating data step by step from noise.

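During training, noise is added to real samples through the forward process, and the model is trained to undo it, commonly by predicting the noise that was added at a given step.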
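The forward process can be sketched in a few lines. The version below assumes a DDPM-style formulation that this article does not spell out: a linear schedule of noise levels beta_t, and the closed form x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps, where alpha_bar_t is the running product of (1 - beta_s). The function names and schedule endpoints are illustrative choices, and the learned reverse process (a neural network) is left out.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise level per step, rising linearly (assumed schedule; needs num_steps >= 2)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for all s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t from x_0 in one shot; also return the noise that was used."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [signal_scale * v + noise_scale * e for v, e in zip(x0, noise)]
    return xt, noise

alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
rng = random.Random(0)
x0 = [1.0, -0.5, 0.25]  # stand-in for a tiny "image"

for t in (0, 500, 999):
    xt, _ = add_noise(x0, t, alpha_bars, rng)
    print(f"t={t:4d}  signal kept={math.sqrt(alpha_bars[t]):.3f}  x_t={[round(v, 3) for v in xt]}")
```

The fraction of the original signal that survives shrinks as t grows, so late steps are almost pure noise. The returned `noise` is what a noise-predicting model would be trained to recover from `xt` and `t`.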

Here are the applications of diffusion models you should know:

- Image Generation: Diffusion models are used to generate high-resolution and detailed images.

- Text-to-Image Generation: Diffusion models are widely used to generate images from textual descriptions (e.g., DALL-E 2 and Stable Diffusion).

You can learn about diffusion models practically here: Image Generation using DALL-E.

RNNs & LSTMs

Recurrent Neural Networks (RNNs) and their improved version, Long Short-Term Memory (LSTM) networks, have been widely used for sequence modeling tasks like time series forecasting and natural language processing. Though transformers have taken over many tasks, RNNs and LSTMs are still valuable for certain applications.

RNNs handle sequential data by updating a hidden state as new data comes in. However, RNNs struggle with long-term dependencies because of the vanishing gradient problem. LSTMs, introduced by Hochreiter and Schmidhuber, address this issue by using special memory cells to store long-term information.

LSTMs use three gates (input, forget, and output) to decide which information to write to the memory cell, which to discard, and which to expose as output. This enables them to handle long sequences more effectively than plain RNNs.
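The gating logic can be sketched as a single step of an LSTM cell. To keep it readable, the version below assumes an input size and hidden size of 1, so every weight is a single number; the `params` layout and the weight values are hypothetical and hand-picked, not learned.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def lstm_step(x, h_prev, c_prev, params):
    """One step of a scalar LSTM cell.

    params maps each of "input", "forget", "output", "candidate"
    to a tuple (w_x, w_h, b): input weight, recurrent weight, bias.
    """
    def pre_activation(name):
        w_x, w_h, b = params[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre_activation("input"))       # how much new information to write
    f = sigmoid(pre_activation("forget"))      # how much old memory to keep
    o = sigmoid(pre_activation("output"))      # how much memory to expose
    g = math.tanh(pre_activation("candidate")) # the new information itself

    c = f * c_prev + i * g  # memory cell: keep part of the old, add part of the new
    h = o * math.tanh(c)    # hidden state: gated view of the memory cell
    return h, c

# Hypothetical weights; the forget bias of 2.0 makes the cell hold on to memory.
params = {
    "input": (0.5, 0.1, 0.0),
    "forget": (0.0, 0.0, 2.0),
    "output": (0.3, 0.2, 0.0),
    "candidate": (1.0, 0.5, 0.0),
}

h, c = 0.0, 0.0
for x in [1.0, 0.0, 0.0, 0.0]:
    h, c = lstm_step(x, h, c, params)
    print(f"x={x:.1f}  h={h:.3f}  c={c:.3f}")
```

The memory cell `c` is updated additively, with the forget gate controlling how much of the previous value carries over. That additive path is what lets information persist across many steps, in contrast to a plain RNN, which overwrites its hidden state at every step.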

Here are the applications of RNNs & LSTMs you should know:

- Time Series Forecasting: RNNs and LSTMs are used for stock price prediction, weather forecasting, and other tasks involving temporal data.

- Speech Recognition: LSTMs can capture the sequential nature of speech signals, which makes them useful for voice-to-text applications.

- Language Modeling: Before transformers, LSTMs were the go-to models for tasks like language translation and text generation.

Here are some resources you can follow to learn about RNNs & LSTMs practically:

Summary

To summarize, here are the essential Generative AI models you should know:

- Generative Adversarial Networks (GANs)

- Transformer Models

- Diffusion Models

- RNNs & LSTMs