Synthetic Data Generation with Generative AI

Synthetic data is artificially generated data that mimics real-world data. It is created by algorithms, models, or simulations rather than being collected from actual events or real-world scenarios. So, if you want to learn about synthetic data generation using Generative AI, this article is for you. In this article, I’ll take you through the task of synthetic data generation with Generative AI using Python.

Synthetic Data Generation: Getting Started

To get started with synthetic data generation, we need a dataset to feed into a Generative Adversarial Network (GAN). The GAN will be trained to generate new samples that resemble the original data and preserve the relationships between its features.

I found an ideal dataset for this task, which contains daily records of insights into app usage patterns over time. Our goal will be to generate synthetic data that mimics the original dataset by ensuring that it maintains the same statistical properties while providing privacy for users’ actual usage behaviour.

You can download the dataset from here.

Synthetic Data Generation using Generative AI

Now, let’s get started with the task of synthetic data generation using Generative AI by importing the necessary Python libraries and the dataset:

import numpy as np
import pandas as pd
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense, LeakyReLU, BatchNormalization
from tensorflow.keras.optimizers import Adam
from sklearn.preprocessing import MinMaxScaler

data = pd.read_csv('/content/Screentime-App-Details.csv')
data.head()

The dataset contains the following columns:

- Date: The date of the screentime data.

- Usage: Total usage time of the app (likely in minutes).

- Notifications: The number of notifications received.

- Times opened: The number of times the app was opened.

- App: The name of the app.

To create a Generative AI model using GANs for generating synthetic data, we need to:

- Drop unnecessary columns: We will not generate the Date or App fields, as they are specific identifiers. Instead, we’ll focus on Usage, Notifications, and Times opened. If you want to keep the App column, convert its values into numerical codes first.

- Normalize the data: GANs perform better with normalized data, usually between 0 and 1.

- Prepare the dataset for training: Ensure the remaining columns are numeric and ready for the model.
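The preparation steps above can be sketched on a toy table with plain NumPy. This is only an illustration under assumptions: the app names and numbers below are made up, and the scaling is written out by hand to show the formula that min-max normalization applies to each column.

```python
import numpy as np

# Made-up stand-in rows: columns are Usage, Notifications, Times opened.
# These values are illustrative only; they are not taken from the dataset.
apps = np.array(["app_a", "app_b", "app_a", "app_b"])
features = np.array([
    [38.0, 70.0, 49.0],
    [39.0, 43.0, 48.0],
    [64.0, 231.0, 55.0],
    [14.0, 35.0, 23.0],
])

# Optional step: map each app name to an integer code if you want to keep it.
app_names, app_codes = np.unique(apps, return_inverse=True)

# Min-max scaling per column: x' = (x - min) / (max - min).
col_min = features.min(axis=0)
col_max = features.max(axis=0)
scaled = (features - col_min) / (col_max - col_min)

# Inverse transform back to the original units: x = x' * (max - min) + min.
restored = scaled * (col_max - col_min) + col_min

print(app_codes)
print(scaled.round(3))
```

Every scaled column now runs from 0 to 1, and the inverse step recovers the original values, which is what makes it possible to map generated samples back to real units later.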

Let’s preprocess the data with all the preprocessing steps we discussed above:

# drop unnecessary columns
data_gan = data.drop(columns=['Date', 'App'])

# initialize a MinMaxScaler to normalize the data between 0 and 1
scaler = MinMaxScaler()

# normalize the data
normalized_data = scaler.fit_transform(data_gan)

# convert back to a DataFrame
normalized_df = pd.DataFrame(normalized_data, columns=data_gan.columns)
normalized_df.head()

The dataset has been normalized, with values between 0 and 1 for the following columns: Usage, Notifications, and Times opened. Now, let’s move on to building the GAN model.

Using GANs to Build a Generative AI Model for Synthetic Data Generation

Here’s the process to define and train the GAN:

- The generator will be trained to produce data similar to the normalized Usage, Notifications, and Times opened columns.

- The discriminator will be trained to distinguish between the real and generated data.

- Next, we will alternate between training the discriminator and the generator. The discriminator will be trained to classify real vs fake data, and the generator will be trained to fool the discriminator.
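To make the two training objectives concrete, here is a minimal NumPy sketch of the binary cross-entropy losses involved. The discriminator scores below are hypothetical numbers chosen for illustration, and `binary_cross_entropy` is a hand-written helper, not a library function.

```python
import numpy as np

def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy; predictions are clipped to avoid log(0)."""
    y_pred = np.clip(y_pred, eps, 1 - eps)
    return float(-np.mean(y_true * np.log(y_pred)
                          + (1 - y_true) * np.log(1 - y_pred)))

# Hypothetical discriminator outputs (probability that a sample is real).
d_on_real = np.array([0.9, 0.8, 0.7])   # scores for real samples
d_on_fake = np.array([0.2, 0.3, 0.1])   # scores for generated samples

ones = np.ones_like(d_on_real)
zeros = np.zeros_like(d_on_fake)

# Discriminator objective: real samples labelled 1, fake samples labelled 0.
d_loss = 0.5 * (binary_cross_entropy(ones, d_on_real)
                + binary_cross_entropy(zeros, d_on_fake))

# Generator objective: the same fake scores, but labelled 1 ("fool" the critic).
g_loss = binary_cross_entropy(ones, d_on_fake)

print(f"D loss: {d_loss:.4f}, G loss: {g_loss:.4f}")
```

In this toy case the discriminator is doing well, so its loss is low while the generator's loss is high. As the generator improves, the fake scores move toward 1 and the generator's loss falls.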

Let’s start building the GAN. The generator will take a latent noise vector as input and generate a synthetic sample similar to the data. Use the LeakyReLU activation for better gradient flow:

latent_dim = 100 # latent space dimension (size of the random noise input)

def build_generator(latent_dim):
    model = Sequential([
        Dense(128, input_dim=latent_dim),
        LeakyReLU(alpha=0.01),
        BatchNormalization(momentum=0.8),
        Dense(256),
        LeakyReLU(alpha=0.01),
        BatchNormalization(momentum=0.8),
        Dense(512),
        LeakyReLU(alpha=0.01),
        BatchNormalization(momentum=0.8),
        Dense(3, activation='sigmoid') # output layer for generating 3 features
    ])
    return model

# create the generator
generator = build_generator(latent_dim)
generator.summary()

Model: "sequential"

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━┓

┃ Layer (type) ┃ Output Shape ┃ Param # ┃

┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━┩

│ dense (Dense) │ (None, 128) │ 12,928 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu (LeakyReLU) │ (None, 128) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ batch_normalization │ (None, 128) │ 512 │

│ (BatchNormalization) │ │ │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_1 (Dense) │ (None, 256) │ 33,024 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu_1 (LeakyReLU) │ (None, 256) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ batch_normalization_1 │ (None, 256) │ 1,024 │

│ (BatchNormalization) │ │ │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_2 (Dense) │ (None, 512) │ 131,584 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu_2 (LeakyReLU) │ (None, 512) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ batch_normalization_2 │ (None, 512) │ 2,048 │

│ (BatchNormalization) │ │ │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_3 (Dense) │ (None, 3) │ 1,539 │

└──────────────────────────────────────┴─────────────────────────────┴─────────────────┘

Total params: 182,659 (713.51 KB)

Trainable params: 180,867 (706.51 KB)

Non-trainable params: 1,792 (7.00 KB)
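The parameter counts in the summary above can be reproduced by hand. The sketch below assumes the standard bookkeeping: a Dense layer has one weight per input-output pair plus one bias per output, and a BatchNormalization layer has four values per unit, of which the scale and shift are trainable and the moving mean and variance are not. The helper names are illustrative.

```python
def dense_params(n_in, n_out):
    """Weights (n_in * n_out) plus one bias per output unit."""
    return n_in * n_out + n_out

def batchnorm_params(n_units):
    """Return (trainable, non_trainable) counts for one BatchNormalization layer."""
    return 2 * n_units, 2 * n_units

latent_dim = 100
hidden_widths = [128, 256, 512]
n_features = 3

trainable = 0
non_trainable = 0
n_in = latent_dim
for n_out in hidden_widths:
    trainable += dense_params(n_in, n_out)
    bn_trainable, bn_frozen = batchnorm_params(n_out)
    trainable += bn_trainable
    non_trainable += bn_frozen
    n_in = n_out

# Output layer that produces the 3 features.
trainable += dense_params(n_in, n_features)

print(trainable, non_trainable, trainable + non_trainable)
```

The three numbers match the trainable, non-trainable, and total counts reported by `generator.summary()`.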

Here’s an example of generating data using the generator network:

# generate random noise for 1000 samples
noise = np.random.normal(0, 1, (1000, latent_dim))

# generate synthetic data using the generator
generated_data = generator.predict(noise)

# display the generated data
generated_data[:5] # show first 5 samples

32/32 ━━━━━━━━━━━━━━━━━━━━ 2s 12ms/step

array([[0.6393597 , 0.6355918 , 0.4789082 ],
       [0.52850807, 0.57966447, 0.5283493 ],
       [0.5063401 , 0.5799302 , 0.50868165],
       [0.5673947 , 0.55326885, 0.4979822 ],
       [0.6030944 , 0.5939912 , 0.47178763]], dtype=float32)
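The sample values above all lie between 0 and 1 because the generator's output layer uses a sigmoid, which matches the range of the normalized training data. As a rough illustration, the two activations used in the generator can be written in NumPy like this (the function names are hand-written stand-ins, not the Keras layers themselves):

```python
import numpy as np

def leaky_relu(x, negative_slope=0.01):
    """Pass positive values through; scale negative values by a small slope."""
    return np.where(x > 0, x, negative_slope * x)

def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))

x = np.array([-5.0, -1.0, 0.0, 1.0, 5.0])

hidden = leaky_relu(x)   # negative inputs keep a small, non-zero signal
output = sigmoid(x)      # always strictly between 0 and 1

print(hidden)
print(output.round(4))
```

Because negative inputs are scaled down rather than zeroed out, LeakyReLU still passes a small gradient for them, which is the "better gradient flow" mentioned earlier.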

Now, the discriminator will take a real or synthetic data sample and classify it as real or fake:

def build_discriminator():
    model = Sequential([
        Dense(512, input_shape=(3,)),
        LeakyReLU(alpha=0.01),
        Dense(256),
        LeakyReLU(alpha=0.01),
        Dense(128),
        LeakyReLU(alpha=0.01),
        Dense(1, activation='sigmoid') # output: 1 neuron for real/fake classification
    ])
    model.compile(loss='binary_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    return model

# create the discriminator
discriminator = build_discriminator()
discriminator.summary()

Model: "sequential_1"

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━┓

┃ Layer (type) ┃ Output Shape ┃ Param # ┃

┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━┩

│ dense_4 (Dense) │ (None, 512) │ 2,048 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu_3 (LeakyReLU) │ (None, 512) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_5 (Dense) │ (None, 256) │ 131,328 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu_4 (LeakyReLU) │ (None, 256) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_6 (Dense) │ (None, 128) │ 32,896 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ leaky_re_lu_5 (LeakyReLU) │ (None, 128) │ 0 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ dense_7 (Dense) │ (None, 1) │ 129 │

└──────────────────────────────────────┴─────────────────────────────┴─────────────────┘

Total params: 166,401 (650.00 KB)

Trainable params: 166,401 (650.00 KB)

Non-trainable params: 0 (0.00 B)

Next, we will freeze the discriminator’s weights when training the generator to ensure only the generator is updated during those training steps:

def build_gan(generator, discriminator):
    # freeze the discriminator’s weights while training the generator
    discriminator.trainable = False
    model = Sequential([generator, discriminator])
    model.compile(loss='binary_crossentropy', optimizer=Adam())
    return model

# create the GAN
gan = build_gan(generator, discriminator)
gan.summary()

Model: "sequential_2"

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━┓

┃ Layer (type) ┃ Output Shape ┃ Param # ┃

┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━┩

│ sequential (Sequential) │ (None, 3) │ 182,659 │

├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤

│ sequential_1 (Sequential) │ (None, 1) │ 166,401 │

└──────────────────────────────────────┴─────────────────────────────┴─────────────────┘

Total params: 349,060 (1.33 MB)

Trainable params: 180,867 (706.51 KB)

Non-trainable params: 168,193 (657.00 KB)
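The split between trainable and non-trainable parameters in the combined model follows directly from freezing the discriminator. A short arithmetic check, using the layer sizes from the discriminator and the counts from the generator summary:

```python
# Counts reported by the generator summary.
generator_trainable = 180_867
generator_non_trainable = 1_792

# Discriminator Dense layers as (inputs, outputs); each has weights plus biases.
discriminator_layers = [(3, 512), (512, 256), (256, 128), (128, 1)]
discriminator_params = sum(n_in * n_out + n_out
                           for n_in, n_out in discriminator_layers)

# Inside the combined model the discriminator is frozen, so all of its
# parameters move to the non-trainable column.
gan_trainable = generator_trainable
gan_non_trainable = generator_non_trainable + discriminator_params
gan_total = gan_trainable + gan_non_trainable

print(discriminator_params, gan_trainable, gan_non_trainable, gan_total)
```

Only the generator's trainable weights are updated when the combined model is trained, which is the behaviour the freezing step is meant to produce.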

Now, we will train the GAN using the following steps:

- Generate random noise.

- Use the generator to create fake data.

- Train the discriminator on both real and fake data.

- Train the generator via the GAN to fool the discriminator.

def train_gan(gan, generator, discriminator, data, epochs=10000, batch_size=128, latent_dim=100):
    for epoch in range(epochs):
        # select a random batch of real data
        idx = np.random.randint(0, data.shape[0], batch_size)
        real_data = data[idx]

        # generate a batch of fake data
        noise = np.random.normal(0, 1, (batch_size, latent_dim))
        fake_data = generator.predict(noise)

        # labels for real and fake data
        real_labels = np.ones((batch_size, 1)) # real data has label 1
        fake_labels = np.zeros((batch_size, 1)) # fake data has label 0

        # train the discriminator
        d_loss_real = discriminator.train_on_batch(real_data, real_labels)
        d_loss_fake = discriminator.train_on_batch(fake_data, fake_labels)

        # train the generator via the GAN
        noise = np.random.normal(0, 1, (batch_size, latent_dim))
        valid_labels = np.ones((batch_size, 1))
        g_loss = gan.train_on_batch(noise, valid_labels)

        # print the progress every 1000 epochs
        if epoch % 1000 == 0:
            print(f"Epoch {epoch}: D Loss: {0.5 * np.add(d_loss_real, d_loss_fake)}, G Loss: {g_loss}")

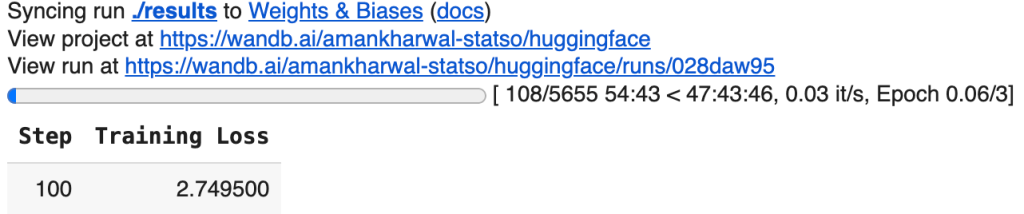

train_gan(gan, generator, discriminator, normalized_data, epochs=10000, batch_size=128, latent_dim=latent_dim)

Streaming output truncated to the last 5000 lines.
4/4 [==============================] - 0s 2ms/step
4/4 [==============================] - 0s 2ms/step
...
4/4 [==============================] - 0s 2ms/step
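The progress line printed by train_gan averages the two discriminator results with np.add. Since the discriminator was compiled with an accuracy metric, each train_on_batch call is expected to return a loss and an accuracy rather than a single number, so the average is taken element by element. A minimal sketch with made-up values, assuming a [loss, accuracy] return format:

```python
import numpy as np

# Made-up results standing in for discriminator.train_on_batch(...) outputs,
# assumed here to be [loss, accuracy] pairs.
d_loss_real = [0.62, 0.70]
d_loss_fake = [0.74, 0.55]

# Element-wise average over the real batch and the fake batch.
d_average = 0.5 * np.add(d_loss_real, d_loss_fake)
average_loss, average_accuracy = d_average

print(f"D loss: {average_loss:.3f}, D accuracy: {average_accuracy:.3f}")
```

The repeated 4/4 progress lines in the output come from the generator.predict call inside the loop. Passing verbose=0 to predict is one way to silence them so that the loss messages are easier to spot.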

Now, here’s how we can use the generator to create new synthetic data:

# generate new data
noise = np.random.normal(0, 1, (1000, latent_dim)) # generate 1000 synthetic samples
generated_data = generator.predict(noise)

# convert the generated data back to the original scale
generated_data_rescaled = scaler.inverse_transform(generated_data)

# convert to DataFrame
generated_df = pd.DataFrame(generated_data_rescaled, columns=data_gan.columns)
generated_df.head()
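Since the goal is for the synthetic data to keep the statistical properties of the original, it is worth comparing the two once generation is done. The sketch below is one simple way to do that, not a complete evaluation: `compare_stats` is a hypothetical helper, and the arrays are random stand-ins rather than the real and generated datasets.

```python
import numpy as np

def compare_stats(real, synthetic):
    """Hypothetical helper: absolute gaps in per-column mean, per-column
    standard deviation, and the feature correlation matrix."""
    return {
        "mean_gap": np.abs(real.mean(axis=0) - synthetic.mean(axis=0)),
        "std_gap": np.abs(real.std(axis=0) - synthetic.std(axis=0)),
        "corr_gap": np.abs(np.corrcoef(real, rowvar=False)
                           - np.corrcoef(synthetic, rowvar=False)),
    }

# Random stand-ins for illustration only: 500 rows, 3 features each.
rng = np.random.default_rng(0)
real = rng.normal(size=(500, 3))
synthetic = real + rng.normal(scale=0.05, size=real.shape)

report = compare_stats(real, synthetic)
for name, gap in report.items():
    print(name, np.round(gap, 3))
```

With the objects from this article, the same helper could be called on `data_gan.to_numpy()` and `generated_df.to_numpy()`. Small gaps suggest the generator has captured the column distributions and the relationships between features; large gaps suggest more training or tuning is needed.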

Summary

In this article, we explored the task of synthetic data generation with Generative AI using Generative Adversarial Networks (GANs). We started by preprocessing a dataset of app usage insights, focusing on the Usage, Notifications, and Times opened features, which were normalized for GAN training. The GAN architecture was built with a generator to create synthetic data and a discriminator to distinguish between real and generated data. After training the two networks in alternation, we used the generator to produce new samples and rescaled them back to the original units.