Recommendation System using Python and TensorFlow

Recommendation systems are the invisible engine behind the success of platforms like Netflix, Amazon, Spotify, and YouTube. They personalize your experience by suggesting what to watch, buy, or listen to next. In this hands-on tutorial, you’ll learn to build a real-world recommendation system using Python and TensorFlow.

We’ll use a real Netflix dataset containing titles, content types, languages, and viewing hours. By the end, you’ll have a deep learning model that can answer questions like: If someone liked Wednesday, what else might they enjoy?

Step 1: Load and Understand the Dataset

We’re using a Netflix 2023 dataset with the following fields:

- Title

- Available Globally?

- Release Date

- Hours Viewed

- Language Indicator

- Content Type

You can download the dataset from here. Let’s load the data and move forward:

import pandas as pd

df = pd.read_csv("/content/netflix_content_2023.csv")
df.head()

Even without user behaviour data, these metadata fields are enough for content-based filtering.

Step 2: Clean and Preprocess the Data

Before modelling, we need to convert the data into a numerical format. So, let’s clean and preprocess the data:

# remove thousands separators from 'Hours Viewed' and cast it to integers
df['Hours Viewed'] = df['Hours Viewed'].str.replace(',', '', regex=False).astype('int64')

# drop rows with missing titles or duplicate titles
df.dropna(subset=['Title'], inplace=True)
df.drop_duplicates(subset=['Title'], inplace=True)

# create simple content IDs (0..n-1, one per remaining row) for TensorFlow embeddings
df['Content_ID'] = df.reset_index().index.astype('int32')

# encode 'Language Indicator' and 'Content Type' as integer category codes
df['Language_ID'] = df['Language Indicator'].astype('category').cat.codes
df['ContentType_ID'] = df['Content Type'].astype('category').cat.codes

df[['Content_ID', 'Title', 'Hours Viewed', 'Language_ID', 'ContentType_ID']].head()

Embedding layers look up rows by integer index, so they cannot take raw strings. That is why we converted the language and content-type metadata into integer category codes.
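To see what the category encoding produces, here is a minimal sketch on a made-up column (the values are illustrative, not taken from the dataset). It also shows a detail worth checking on real data: `cat.codes` assigns -1 to missing values, which is not a valid embedding index. Filling gaps with a placeholder label first is one option, and it is an assumption here rather than part of the pipeline above.

```python
import pandas as pd

# toy column; values are illustrative, not from the Netflix file
languages = pd.Series(['English', 'Korean', None, 'English'])

# categories are sorted alphabetically; missing values get code -1
raw_codes = languages.astype('category').cat.codes.tolist()

# filling gaps with a placeholder label gives every row a non-negative code
filled_codes = languages.fillna('Unknown').astype('category').cat.codes.tolist()

print(raw_codes)     # [0, 1, -1, 0]
print(filled_codes)  # [0, 1, 2, 0]
```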

Step 3: Build a Neural Recommendation Model Using TensorFlow

We will use embeddings to capture complex relationships between features like language, type, and content ID:

import tensorflow as tf
from tensorflow.keras import layers, Model

# vocabulary sizes for the three embedding tables
num_contents = df['Content_ID'].nunique()
num_languages = df['Language_ID'].nunique()
num_types = df['ContentType_ID'].nunique()

# one integer input per feature
content_input = layers.Input(shape=(1,), dtype=tf.int32, name='content_id')
language_input = layers.Input(shape=(1,), dtype=tf.int32, name='language_id')
type_input = layers.Input(shape=(1,), dtype=tf.int32, name='content_type')

# embedding tables; the +1 leaves one spare index beyond the largest ID
content_embedding = layers.Embedding(input_dim=num_contents+1, output_dim=32)(content_input)
language_embedding = layers.Embedding(input_dim=num_languages+1, output_dim=8)(language_input)
type_embedding = layers.Embedding(input_dim=num_types+1, output_dim=4)(type_input)

# flatten each (batch, 1, dim) embedding output to (batch, dim)
content_vec = layers.Flatten()(content_embedding)
language_vec = layers.Flatten()(language_embedding)
type_vec = layers.Flatten()(type_embedding)

# join the feature vectors and pass them through two dense layers
combined = layers.Concatenate()([content_vec, language_vec, type_vec])
x = layers.Dense(64, activation='relu')(combined)
x = layers.Dense(32, activation='relu')(x)

# softmax output: one probability per content ID
output = layers.Dense(num_contents, activation='softmax')(x)

model = Model(inputs=[content_input, language_input, type_input], outputs=output)
model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

Embeddings compress high-dimensional categorical data (like content IDs or languages) into dense vectors where similar values cluster together. This lets our model learn which content is similar.
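Conceptually, an embedding layer is a trainable lookup table: row i of a weight matrix is the vector for ID i. The NumPy sketch below mimics that lookup with random, untrained weights and toy vocabulary sizes (all names and sizes are illustrative assumptions). It also shows that concatenating the 32-, 8-, and 4-dimensional vectors used in the model gives 44 features per title for the dense layers.

```python
import numpy as np

rng = np.random.default_rng(0)

# toy vocabulary sizes; output widths match the model above (32, 8, 4)
content_table = rng.normal(size=(10, 32))
language_table = rng.normal(size=(3, 8))
type_table = rng.normal(size=(2, 4))

# a batch of three titles described by integer IDs
content_ids = np.array([7, 2, 7])
language_ids = np.array([0, 1, 0])
type_ids = np.array([1, 1, 1])

# embedding lookup is plain row indexing; concatenation joins the feature vectors
combined = np.concatenate(
    [content_table[content_ids], language_table[language_ids], type_table[type_ids]],
    axis=1,
)

print(combined.shape)  # (3, 44)
```

Rows 0 and 2 of `combined` are identical because they carry the same IDs, which is exactly what a lookup table implies.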

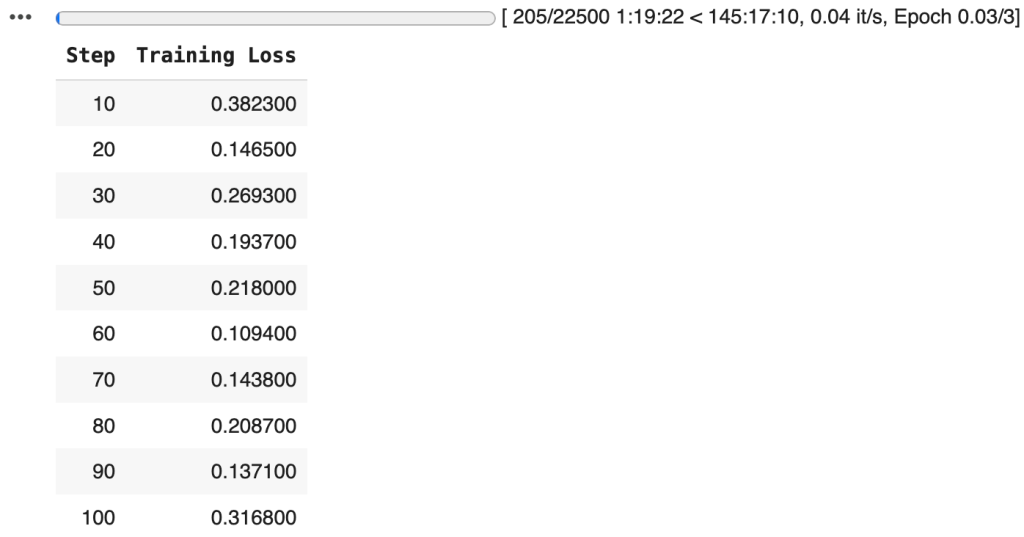

Step 4: Train the Recommendation Model

We’ll use each title’s own Content_ID as the label, so the model learns to predict a title from its ID, language, and content type. No external labels are needed, which makes this a self-supervised setup:

model.fit(
    x={
        'content_id': df['Content_ID'],
        'language_id': df['Language_ID'],
        'content_type': df['ContentType_ID']
    },
    y=df['Content_ID'],
    epochs=5,
    batch_size=64
)

Epoch 1/5

300/300 ━━━━━━━━━━━━━━━━━━━━ 14s 41ms/step - accuracy: 0.0000e+00 - loss: 9.8788

Epoch 2/5

300/300 ━━━━━━━━━━━━━━━━━━━━ 19s 37ms/step - accuracy: 0.0000e+00 - loss: 9.8650

Epoch 3/5

300/300 ━━━━━━━━━━━━━━━━━━━━ 10s 34ms/step - accuracy: 0.0014 - loss: 9.6823

Epoch 4/5

300/300 ━━━━━━━━━━━━━━━━━━━━ 21s 38ms/step - accuracy: 0.0067 - loss: 8.4410

Epoch 5/5

300/300 ━━━━━━━━━━━━━━━━━━━━ 11s 36ms/step - accuracy: 0.0999 - loss: 6.5097

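Training shapes the embedding space using the language and content-type metadata, so titles that share metadata tend to end up with similar representations, and recommendations can then be based on closeness in vector space. Accuracy stays low because every title is its own class and appears only once in the training data.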
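The numbers in the training log can be sanity-checked with a little arithmetic. A softmax classifier that guesses uniformly over N classes has a cross-entropy loss of ln(N) and an accuracy of 1/N. The sketch below assumes roughly 300 × 64 = 19,200 titles, an upper bound inferred from the step count and batch size in the log, not a figure stated by the dataset.

```python
import math

# assumption: 300 steps per epoch x batch size 64 gives at most 19,200 rows
num_classes = 300 * 64

# loss and accuracy of a model that assigns equal probability to every title
uniform_loss = math.log(num_classes)
chance_accuracy = 1 / num_classes

print(f"uniform-guess loss: {uniform_loss:.2f}")  # about 9.86
print(f"chance accuracy:    {chance_accuracy:.6f}")
```

The first-epoch loss of about 9.88 sits close to this baseline, so the model starts out near uniform guessing. The final accuracy of roughly 0.10 looks small but is far above chance.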

Step 5: Recommend Similar Content

Once the model is trained, you can input any show/movie and get a list of similar titles. Here’s how:

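import numpy as np

def recommend_similar(content_title, top_k=5):
    # find the first title containing the query string (case-insensitive)
    matches = df[df['Title'].str.contains(content_title, case=False, na=False)]
    if matches.empty:
        raise ValueError(f"No title matching '{content_title}' was found")
    content_row = matches.iloc[0]
    content_id = content_row['Content_ID']
    language_id = content_row['Language_ID']
    content_type_id = content_row['ContentType_ID']

    # predict a probability for every content ID given this title's features
    predictions = model.predict({
        'content_id': np.array([content_id]),
        'language_id': np.array([language_id]),
        'content_type': np.array([content_type_id])
    })

    # rank IDs from most to least probable, drop the query title, keep top_k
    ranked = predictions[0].argsort()[::-1]
    top_indices = ranked[ranked != content_id][:top_k]

    # look rows up in ranked order; isin() would return them in DataFrame order
    recommendations = df.set_index('Content_ID').loc[top_indices]
    return recommendations[['Title', 'Language Indicator', 'Content Type', 'Hours Viewed']]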

recommend_similar("Wednesday")
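The ranking step relies on `argsort`, so it helps to see it on a toy probability vector (the values are made up). This sketch sorts IDs from most to least probable and, as one possible design choice, drops the query item so a title is never recommended to itself. The helper name is hypothetical.

```python
import numpy as np

def top_k_excluding(probabilities, query_id, top_k):
    """Return the top_k highest-probability IDs, best first, skipping query_id."""
    ranked = np.argsort(probabilities)[::-1]   # indices sorted high to low
    ranked = ranked[ranked != query_id]        # remove the query itself
    return ranked[:top_k].tolist()

# toy softmax output over five content IDs
probs = np.array([0.10, 0.50, 0.04, 0.30, 0.06])

print(top_k_excluding(probs, query_id=1, top_k=2))  # [3, 0]
```

Keep in mind that filtering a DataFrame with `isin` returns rows in DataFrame order, so the ranked order has to be reapplied if it matters.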

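The content embedding maps each title into a 32-dimensional space. Because the model is trained on content ID, language, and content type, the titles it ranks highly for a given input are most likely to match that input in:

- Language

- Content Type

Hours Viewed is not fed to the model in this tutorial, so viewership patterns are not captured; adding that column as an input feature would be the way to include them.

So, even without user feedback, your model can say: “Hey, these titles are kind of alike.”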
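If you want recommendations based directly on closeness in the embedding space, not on the softmax scores, one common option is cosine similarity between embedding rows. The sketch below uses a tiny hand-written matrix; with the trained model, the matrix would instead be the weight matrix of the content embedding layer. The function name and toy values are illustrative assumptions.

```python
import numpy as np

def nearest_by_cosine(embeddings, query_id, top_k=5):
    """Return the IDs of the top_k rows most cosine-similar to row query_id."""
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)  # guard against zero vectors
    sims = unit @ unit[query_id]                     # cosine similarity to the query
    sims[query_id] = -np.inf                         # never return the query itself
    return np.argsort(sims)[::-1][:top_k].tolist()

# toy 2-dimensional embeddings for four items
toy_embeddings = np.array([
    [1.0, 0.0],
    [0.9, 0.1],
    [0.0, 1.0],
    [-1.0, 0.0],
])

print(nearest_by_cosine(toy_embeddings, query_id=0, top_k=2))  # [1, 2]
```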

Final Words

With just content features and deep learning, you’ve now built a content-based recommendation system in TensorFlow that needs no user data. This not only showcases your ability to work with embeddings and real-world data but also lays the foundation for building smarter, scalable, and personalized AI systems, like the ones used by Netflix and Amazon.